How continuous testing brought our clients a 40% increase in conversions

A key component of mobile app marketing is testing. Testing helps us define what works and what doesn’t, as we gather data and evidence in support of strategic changes. At Mobtimizers we continuously test to achieve data-driven results for our clients as part of our App Store Optimization strategy. Watch Appfigures interview Mobtimizers on tips for ASO strategies here.

What is A/B testing?

A/B testing for mobile apps is a method of comparing two or more versions of an app (e.g., a ‘Control’ vs ‘Treatment A’ and/or ‘Treatment B’) to determine which one performs better. This type of testing is used to optimize the user experience and improve the performance of the app.

App Store Optimization follows the same principles. The testing is performed in the app stores and is often focused on specific elements of the app store presence, such as the title, short description, screenshot, icon, or CTA.

Based on the results of the test, the app can be updated to include the elements that were found to be more effective.

How do you run an A/B test?

The basic steps for conducting an A/B test for mobile apps typically involve the following:

Define the goals of the test: Determine what aspect of the app you want to test and what specific metrics you will use to measure the success of the test. Conduct market research to guide your asset creation and testing choices.

Create the variations: Create the assets you are going to test, such as treatment A and treatment B. Ideally, all other elements remain the same, so you get a clear picture of what is driving downloads and conversions.

Implement (or publish) the test: Publish the app store treatments in Google Play (Store Listing Experiments) or the Apple App Store (Product Page Optimization). Whatever your app’s volume, give your experiment at least one week to run so it collects enough data. If your test targets a market with low install volume, you might have to run it for multiple weeks.

Analyze the results: Compare the data collected from each group of store visitors to determine which of the tested assets performed better. Based on the results of the test, decide which version to implement.

Continuously test and improve: A/B testing should be an ongoing process to continuously improve your mobile app.
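How long "enough data" takes depends on your baseline conversion rate, the size of the lift you hope to detect, and your daily store traffic. The sketch below is a rough planning aid, not the method used by Google Play or Apple: it applies the standard two-proportion sample-size approximation. The function names, the 5% significance level, and the 80% power are assumptions chosen for illustration.

```python
import math
from statistics import NormalDist


def visitors_per_variant(baseline_cr, relative_lift, alpha=0.05, power=0.80):
    """Approximate store visitors needed per variant to detect a relative lift.

    baseline_cr   -- current conversion rate, e.g. 0.30 for 30%
    relative_lift -- smallest lift worth detecting, e.g. 0.10 for +10%
    alpha, power  -- assumed significance level and statistical power
    """
    p1 = baseline_cr
    p2 = baseline_cr * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided test
    z_beta = NormalDist().inv_cdf(power)
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return math.ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)


def days_to_run(n_per_variant, daily_visitors, variants=2, min_days=7):
    """Estimate test duration, never shorter than the one-week minimum."""
    days = math.ceil(n_per_variant * variants / daily_visitors)
    return max(days, min_days)


if __name__ == "__main__":
    # Hypothetical numbers: 30% baseline CR, +10% target lift, 500 visitors/day.
    n = visitors_per_variant(0.30, 0.10)
    print(n, "visitors per variant,", days_to_run(n, 500), "days")
```

A low-volume market raises the estimate quickly, which is why such tests often need multiple weeks.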

Below is an example of how Mobtimizers helped our client achieve an almost 40% increase in conversion rate over 5 months of continuous testing.

What are A/B testing best practices?

Aside from the basic steps above, there are best practices for A/B testing that can help ensure the accuracy and reliability of the results.

It is important to have clearly defined goals. Have a clear understanding of what you want to achieve with the test, and define specific metrics to measure success.

In most cases, it is best to test one variable at a time, such as the icon, long description, or CTA, to be able to clearly identify the effect of the change. Keep the test running for a sufficient period of time to gather enough data to make a meaningful comparison.

Use proper statistical analysis to determine whether the results are statistically significant. There are tools to help with experiments, and you can read more about them below.
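As a minimal sketch of what such an analysis can look like, the function below runs a two-sided two-proportion z-test on install counts for a control and a treatment. This is one common approach, not necessarily the calculation the store consoles perform, and the function name and sample numbers are hypothetical.

```python
import math
from statistics import NormalDist


def two_proportion_z_test(installs_a, visitors_a, installs_b, visitors_b):
    """Compare conversion rates of control (a) and treatment (b).

    Returns (relative_lift, z_score, p_value) for a two-sided test.
    """
    cr_a = installs_a / visitors_a
    cr_b = installs_b / visitors_b
    # Pooled rate under the null hypothesis that both variants convert equally.
    pooled = (installs_a + installs_b) / (visitors_a + visitors_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / visitors_a + 1 / visitors_b))
    z = (cr_b - cr_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    relative_lift = (cr_b - cr_a) / cr_a
    return relative_lift, z, p_value


if __name__ == "__main__":
    # Hypothetical data: 300/1000 installs for control, 345/1000 for treatment.
    lift, z, p = two_proportion_z_test(300, 1000, 345, 1000)
    print(f"lift={lift:.1%} z={z:.2f} p={p:.3f}")
```

A p-value below the threshold you chose in advance (0.05 is a common choice) suggests the difference is unlikely to be due to chance alone.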

A/B testing is an ongoing process, and it is important to continuously test and improve the app based on the results of previous tests.

There are many tools available to help with A/B testing for mobile apps, such as Optimizely, Mixpanel, and Google Analytics. These tools can help automate the testing process, making it easier to conduct, analyze, and implement the results of the tests.

Does A/B testing differ for mobile apps and web apps? If so, how?

A/B testing for mobile apps and A/B testing for web apps are similar in many ways, but there are some key differences:

Platform: A/B tests for mobile apps run on mobile devices, while A/B tests for web apps typically run in browsers on desktop or laptop computers. Mobile app tests therefore have to account for the different screen sizes, resolutions, and operating systems of different devices, while web app tests have to account for the different browsers used to access them.

User behavior: Mobile apps are often used on the go, which means that users may be more likely to use them in short bursts of time. Web apps are typically used on desktop or laptop computers, which means that users are more likely to use them for longer periods of time. This can affect how users interact with the app, and how long they spend on different parts of the app.

Interaction: Mobile apps are often designed for touch-based interactions, such as swiping and tapping, while web apps are typically designed for mouse-based interactions, such as clicking and hovering. This can affect how users interact with the app, and how intuitive the app is to use.

Navigation: Mobile apps often use a bottom navigation bar, while web apps often use a top navigation bar. This design choice affects the way users navigate and interact with the app.

Tools: There are different tools and frameworks available for A/B testing mobile apps and web apps. For mobile apps, tools such as Firebase Remote Config, Leanplum, and Apptimize can be used. For web apps, tools such as Google Optimize and Visual Website Optimizer can be used. Adobe Target and Optimizely can be used for both mobile and web apps.

Overall, A/B testing for mobile apps and web apps is similar in many ways, but the differences in platform, user behavior, interaction and navigation design should be considered when planning, running, and analyzing the results of an A/B test.

Are there other testing options besides A/B testing?

Yes, there are other testing options besides A/B testing for mobile apps:

Multivariate testing: This type of testing involves testing multiple variables at the same time, rather than testing one variable at a time like in A/B testing. This can provide more detailed insights into how different elements of the app interact with one another.

Beta testing: Beta testing is an early form of testing in which the app is made available to a group of users who test the app and provide feedback. This can help identify bugs and usability issues before the app is released to the general public.

Usability testing: This type of testing is focused on evaluating the usability of the app. It involves having users complete specific tasks while researchers observe and collect data on how the users interact with the app.

User acceptance testing: This type of testing is done to confirm that the app meets the business and technical requirements that guided its design and development.

These are just a few examples of the different types of testing that can be used. The choice of testing method will depend on the specific goals of the test, as well as the stage of development of the app.
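To make the multivariate option above concrete: a full-factorial multivariate test shows every combination of the elements under test, so the number of variants multiplies quickly. The sketch below enumerates those combinations. The element names and values are hypothetical placeholders.

```python
from itertools import product


def build_variants(elements):
    """Return every combination of the tested elements (full-factorial design).

    elements -- dict mapping an element name to the list of options to test
    """
    names = list(elements)
    return [dict(zip(names, combo)) for combo in product(*elements.values())]


if __name__ == "__main__":
    # Hypothetical store listing elements and options.
    variants = build_variants({
        "icon": ["icon_current", "icon_new"],
        "screenshots": ["set_a", "set_b", "set_c"],
        "short_description": ["desc_current", "desc_restructured"],
    })
    print(len(variants))  # 2 * 3 * 2 = 12 combinations
```

Because traffic is split across all combinations, a multivariate test generally needs more visitors or more time than a simple A/B test to reach the same confidence.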

What are popular tools to use for A/B testing?

Firebase Remote Config: This is a tool from Google that allows developers to make real-time changes to their apps without requiring an app update. It can be used for A/B testing and allows developers to target different groups of users with different variations of the app.

Leanplum: This is a mobile marketing platform that includes A/B testing functionality. It allows developers to test different variations of an app and target different groups of users.

Apptimize: This is a mobile optimization platform that allows developers to test different variations of an app and target different groups of users.

Taplytics: This is a mobile optimization platform that provides A/B testing, feature flagging, and analytics capabilities.

Appcelerator: This is a mobile development platform that includes A/B testing and analytics capabilities.

Splitforce: This is a mobile optimization platform that allows developers to test different variations of an app and target different groups of users.

SplitMetrics: This is a mobile optimization platform that allows developers to test different variations of an app store listing, such as icons, screenshots, and more.

These are just a few examples of the many A/B testing tools available for mobile apps. The choice of tool will depend on the specific needs of your app and the goals of your test, so be sure to evaluate the features and capabilities of each tool before making a decision.
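Several of the tools above describe targeting different groups of users with different variations. As a generic illustration of how that kind of split can work (not the actual implementation of any tool listed here), the sketch below assigns each user to a variant by hashing a user ID together with an experiment name. The function name, variant labels, and IDs are all assumptions.

```python
import hashlib


def assign_variant(user_id, experiment,
                   variants=("control", "treatment_a", "treatment_b")):
    """Deterministically map a user to one variant of an experiment.

    Hashing the experiment name with the user ID keeps a user in the same
    group across sessions, while different experiments split independently.
    """
    key = f"{experiment}:{user_id}".encode("utf-8")
    digest = hashlib.sha256(key).hexdigest()
    return variants[int(digest, 16) % len(variants)]


if __name__ == "__main__":
    # Hypothetical user IDs and experiment name.
    for uid in ("user-1", "user-2", "user-3"):
        print(uid, assign_variant(uid, "icon_test"))
```

The split is approximately even across variants and needs no stored assignment table, since the same inputs always produce the same group.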

Surprising insights we learned from running A/B and multivariate testing

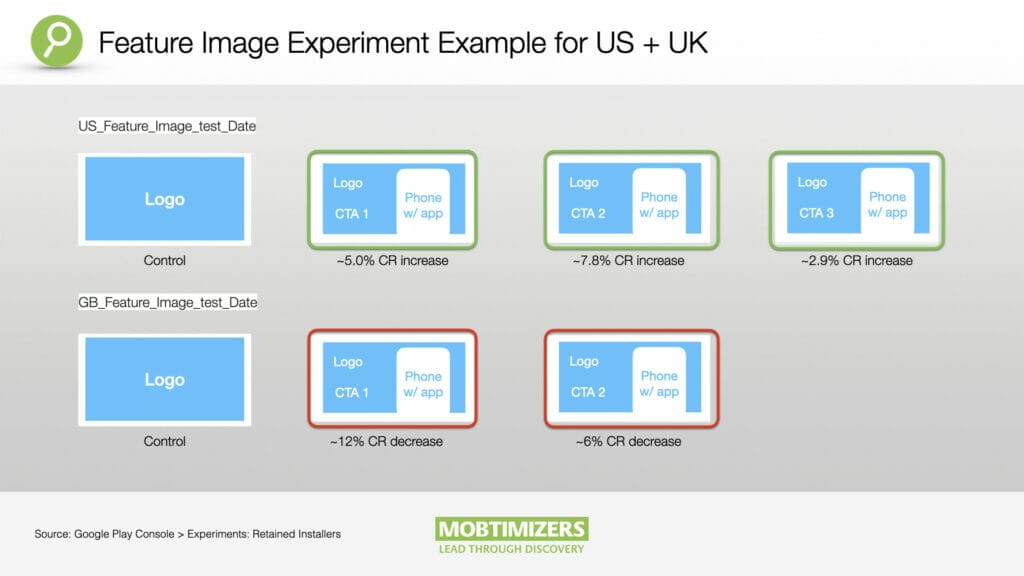

Below is an example of a Google Play feature graphic. It shows two very different conversion rates (CR) for the US versus the UK. The client did not expect the CR to differ so much, as the assets were almost identical.

Social proof can help in markets where the brand is not a leading player. The example below shows a 6.5% increase in “retained-installers” and a 7.5% increase in “first-time-installers” on Google Play from a simple focus on “social proof” as part of the CTA copy. Note that ‘Free + Fun’ is the primary CTA in this example, while the social proof (e.g., “Join 6 million other happy users”) serves as a secondary CTA.

Restructuring your short descriptions can lead to positive results. In the example below, we took our best performing US short description and restructured the sentence. In the target market, we saw a 5.3% increase in retained first-time installers.

In conclusion

Running experiments is exploration. Not all captains of ships end up where they set out to go. The same goes for the art of running experiments in the app stores. You have to be ready to adapt your thinking, and maybe even your product, as you venture out on this journey. This is why Mobtimizers sees experimentation as an integral part of our digital strategy.

At Mobtimizers, we understand the value of experimentation in digital strategy, and we are dedicated to helping our clients navigate the waters of app store optimization through rigorous testing and analysis. Our goal is to align our clients’ needs, brand, and messaging with the data and analysis we gather from market research and, more importantly, testing.

The key to growth is data, and finding the data to support strategic decisions. By conducting market research, A/B testing, and experiments, we can gain real-time insights into what matters to users. Data is an integral part of ASO strategy, and strategic testing helps you find the results that matter.

Reach out to Mobtimizers today and learn more about how we can help grow your business with our strategic experimentation and ASO services.